Brand Tracking Must Change: Moving from Merely Measuring to Mastering the Why

Why traditional brand tracking fails—and how multi-signal explanation changes what teams can do with insight.

The brand tracker that just keeps score isn’t enough anymore.

There’s a moment most insights leaders know well. The brand tracker lands. You open the deck. “Consideration” is down three points. “Awareness” held flat. “Net favorability” slipped a tick in two markets.


And then comes the question nobody can answer: “Why?”

That’s the core failure of traditional brand tracking. Not the measurement itself. We’ve gotten reasonably good at measuring as an industry. The failure is what happens after the brand tracker is distributed. The debrief turns into a debate. The brand team has a hypothesis. The comms team has a different one. The regional leads disagree with both. Everyone’s working from the same numbers and reaching different conclusions, because the numbers don’t actually explain anything. They only document. They don’t diagnose.

I’ve been in those rooms on both sides of the table: first as a client in insights and brand management at Campbell Soup Company, and then as a supplier here at Finch Brands. The honest truth is that most brand tracking, even from reputable partners, is fundamentally a scorekeeping exercise dressed up as strategy.

That’s a problem worth solving.

The Comfortable Illusions of Traditional Tracking

Ask most brand leaders what they want from their tracker and you’ll hear some version of the same answer: we want to understand what’s driving change and what to do about it.

Ask them what their tracker delivers and you’ll hear something different: scores over time, a wave report, a few charts comparing this year to last year.

That gap exists because most tracking programs were architected around a narrow idea of what measurement means. Ask the same questions of the same kinds of people on a consistent cadence and watch the numbers move. It’s a rigorous approach to a limited question. The assumption baked in is that the survey instrument is the complete story: that if something is true and important, it will show up in a five-point brand funnel and a handful of attribute batteries.

It won’t. Not reliably. Not fast enough. Not with enough context for your company to act on.

A fashion brand losing relevance with Gen Z doesn’t lose it in a tracker first. It loses it on TikTok and in street-style commentary, months before awareness metrics start to twitch. A brand gaining consideration on a product innovation gets credit in the funnel scores eventually, but the tracker won’t tell you which attribute did the work, or whether it’s translating to actual sales velocity.

Traditional trackers are lagging indicators with no mechanism to explain themselves. For a brand operating in a fast-moving category, that’s a real strategic liability.

Measuring More Isn’t the Same as Knowing More

Part of the problem is that most trackers weren’t really built for your brand from the start. They were built from a generic template and adapted.

That manifests in a few ways. The attribute list measures things that sound relevant but weren’t derived from the specific drivers of loyalty and choice in your category. The metrics are industry-standard in a way that enables benchmarking but blurs the particular things that actually differentiate your brand. The competitive set gets locked in at kickoff, and then nobody updates it when a new entrant starts stealing share.

Worse, the whole instrument tends toward comprehensiveness at the expense of precision. More questions, more attributes, more segments to cut, until the bloated tracker is measuring everything and optimized for nothing. Long surveys mean fatigued respondents. Fatigued respondents mean data quality problems. More attributes mean everything looks equally important, which is analytically useless.

The right tracker for your brand isn’t just longer or shorter than a generic one. It’s structurally different. The measures should connect directly to the decisions your team makes. Every KPI should link to a business question, not just a report.

The Survey Can’t Explain Itself

If the goal isn’t scorekeeping but understanding, the architecture of tracking must change fundamentally. Survey data alone can’t get you there.

Think about what actually moves brand metrics. A competitor launches an aggressive price campaign. A cultural moment shifts the meaning of a category. A product quality issue surfaces in reviews and compounds through social conversation. None of these forces live in a survey instrument. The survey measures their downstream effect, with a lag.

Understanding why brand metrics move requires triangulating multiple signals simultaneously.

- The survey tells you what consumers say they think and feel.

- Driver analysis tells you which attributes are doing work beneath those scores.

- Social intelligence captures how cultural narratives are gaining or losing velocity in real time.

- Product reviews surface the lived brand experience in the consumer’s own words.

- Sales data closes the loop between perception and behavior.

No single source explains brand performance on its own. That’s what most tracking programs miss. They treat the survey as the authoritative source and everything else as supplemental color. The better architecture reverses that assumption: the survey is one input into a multi-signal explanation system. When something moves, you triangulate.

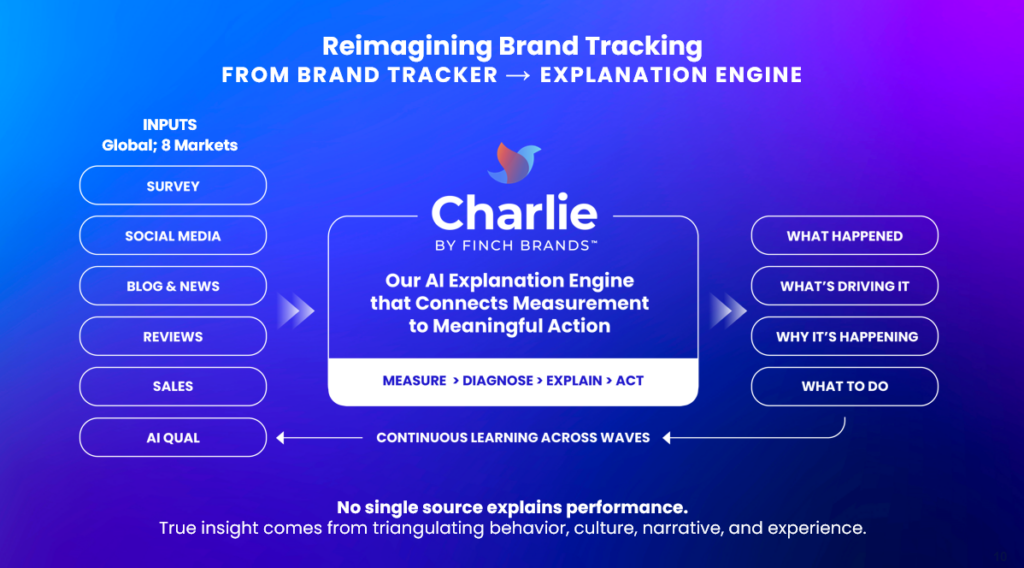

We think of this as building an explanation engine (see image above) rather than a measuring instrument. The difference matters. A measuring instrument tells you what happened. An explanation engine tells you why, and points to what should happen next.

From Knowing What to Knowing Why

Knowing metrics dropped doesn’t help brand teams act.

Knowing they dropped because emotional connection deteriorated among a key segment, driven by a gap between brand promise and product experience, validated by a pattern in recent reviews and a shift in social sentiment…that’s actionable! That’s the difference between a debrief that generates debate and one that generates a plan.

Getting to that level of diagnosis requires a few things traditional trackers typically skip.

#1 Smarter measurement.

Standard importance ratings on attribute batteries are among the most overused and least useful tools in brand research. When you ask people how important something is, everything is important. Forced-prioritization methods like MaxDiff (best-worst scaling) make respondents pick the most and least important items from a series of small sets, which surfaces the true hierarchy between what drives loyalty and what’s just table stakes. That difference is where strategy lives.
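To make the forced-choice idea concrete, here is a minimal sketch of count-based best-minus-worst scoring. The attributes and responses are hypothetical, and the method is a simplification: many MaxDiff studies estimate utilities with multinomial logit or hierarchical Bayes models rather than raw counts. It is not a description of Finch Brands’ actual analysis.

```python
from collections import Counter

# Hypothetical MaxDiff tasks: the attributes shown in each set, plus the
# respondent's "most important" (best) and "least important" (worst) picks.
tasks = [
    {"shown": ["Taste", "Price", "Convenience", "Heritage"], "best": "Taste", "worst": "Heritage"},
    {"shown": ["Taste", "Price", "Health", "Heritage"], "best": "Price", "worst": "Heritage"},
    {"shown": ["Convenience", "Health", "Price", "Heritage"], "best": "Price", "worst": "Convenience"},
    {"shown": ["Taste", "Convenience", "Health", "Price"], "best": "Taste", "worst": "Health"},
]

def best_worst_scores(tasks):
    """Return (best count - worst count) / times shown per attribute, ranked high to low."""
    shown, best, worst = Counter(), Counter(), Counter()
    for task in tasks:
        shown.update(task["shown"])
        best[task["best"]] += 1
        worst[task["worst"]] += 1
    scores = {attr: (best[attr] - worst[attr]) / shown[attr] for attr in shown}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

for attribute, score in best_worst_scores(tasks):
    print(f"{attribute:<12} {score:+.2f}")
```

Because every pick is a trade-off, the scores spread out from positive to negative instead of clustering near the top the way importance ratings tend to.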

#2 Explicit driver modeling.

Not just correlation, but structural analysis that isolates what’s causing outcomes versus what’s merely moving alongside them. Knowing which levers actually move consideration and advocacy, rather than just correlating with them, changes where investment goes.

#3 Continuity without rigidity.

Longitudinal trend data must be protected. But “protecting trends” sometimes becomes an excuse never to evolve the instrument. The right approach is a disciplined core of locked KPIs paired with a light, flexible learning layer that goes deeper on priority diagnostic questions without touching the core. Keep the scoreboard intact. Do the diagnosis somewhere else.

The Cadence Question and How to Answer It

Most trackers run once or twice a year. That’s the industry default, and it has real methodological logic behind it: full samples, comprehensive instruments, meaningful trend intervals.

But it also means you’re flying mostly blind for six months at a time.

- Seasonality is invisible.

- Emerging competitive threats don’t register until they’ve already done damage.

- An unexpected shift after a brand moment gets picked up in retrospect, not in time to respond.

The right answer isn’t necessarily four deep waves a year. That’s expensive and, frankly, you’re unlikely to see enough genuine movement quarter over quarter in a stable tracking program to justify the cost. But a core-plus-pulse design, with full diagnostic reads twice a year and lighter-touch KPI checks in between, lets you detect early signals and course-correct without waiting six months to confirm what you already suspect.

The pulse waves aren’t meant to replace the core. They’re designed to fill the gaps. Track movement in key metrics, flag anything that warrants a closer look, feed the agenda for the next deep wave. Combined with an always-on social and review intelligence layer, that rhythm gives brand teams something much closer to continuous visibility than the traditional model allows.
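How might a pulse wave “flag anything that warrants a closer look”? One common approach, assumed here rather than taken from this article, is a two-proportion z-test on a KPI between waves. The sketch assumes independent simple random samples in each wave and ignores weighting and design effects; the 42% consideration level and the sample sizes of 1,500 (core wave) and 600 (pulse wave) are hypothetical.

```python
from math import erfc, sqrt

def flag_kpi_shift(p_prev, n_prev, p_curr, n_curr, alpha=0.05):
    """Two-proportion z-test: is the wave-to-wave change larger than sampling noise?"""
    pooled = (p_prev * n_prev + p_curr * n_curr) / (n_prev + n_curr)
    se = sqrt(pooled * (1 - pooled) * (1 / n_prev + 1 / n_curr))
    z = (p_curr - p_prev) / se
    p_value = erfc(abs(z) / sqrt(2))  # two-sided p-value under the normal approximation
    return z, p_value, p_value < alpha

# Hypothetical: 42% consideration in the core wave, then two possible pulse readings.
for label, current in [("3-point drop", 0.39), ("6-point drop", 0.36)]:
    z, p, flagged = flag_kpi_shift(0.42, 1500, current, 600)
    print(f"{label}: z={z:.2f}, p={p:.3f}, flag={flagged}")
```

Under these assumed sample sizes, the three-point drop stays within sampling noise while the six-point drop is flagged, which illustrates why a pulse read works best as a prompt for a closer look rather than a final verdict.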

The Explanation Layer You’re (Likely) Missing

Survey trackers have a built-in limitation that everyone acknowledges and almost nobody addresses structurally: they can tell you “that” something changed, but they cannot tell you “why” in human terms. You can statistically model drivers. You can analyze attribute movement. But you still won’t know what it feels like to be a consumer whose relationship with the brand shifted.

The traditional solution is to run qualitative research, such as focus groups or in-depth interviews (IDIs), alongside the tracker. The problem is that this qual is usually disconnected from the tracker signals. It runs on a separate cadence, with a separate team, answering a separate research agenda. By the time the qual insights are synthesized and presented, the tracker has moved on.

A better architecture is qual that’s directly responsive to tracker movement. Not a fixed research agenda designed months in advance, but targeted exploration triggered by what the data is showing when it arrives.

- When a key driver shifts in an unexpected direction, that’s the question that goes into agile, AI-moderated video interviews.

- When social intelligence surfaces an emerging narrative that isn’t showing up yet in the survey, that’s what gets explored with real people in their own voice.

Qual as an evergreen explanation layer, rather than a periodic add-on, changes what tracking can deliver. It’s the difference between data and understanding at scale.

What a Better Approach Looks Like in Practice

At Finch Brands, we developed our approach to brand tracking from real client problems and real client feedback. One example came from our work with Disney’s loyalty program. The client needed more than a periodic readout on member sentiment. They needed to understand why satisfaction moved wave to wave, for which members, and what was driving the change. Standard tracking wasn’t built for that question.

So, we built something different. We used an online community to maintain a consistent audience of loyalty program members, tracked NPS wave to wave at the individual level, and then dynamically identified members whose satisfaction had shifted so we could go deeper on the ‘why’ through agile follow-up qual. The tracker and the explanation layer were integrated from the start.
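The selection rule used in that engagement isn’t described here, so the following is only a hypothetical illustration of the general idea. It computes NPS (the percentage of promoters scoring 9 to 10 minus the percentage of detractors scoring 0 to 6) for each wave, then flags panel members whose NPS category changed or whose score fell by an assumed threshold of two points. Member IDs, scores, and the threshold are invented.

```python
def nps(scores):
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)

def category(score):
    """Standard NPS bucket for a 0-10 likelihood-to-recommend score."""
    if score >= 9:
        return "promoter"
    return "detractor" if score <= 6 else "passive"

# Hypothetical panel: member ID -> 0-10 score in each wave.
wave_1 = {"m01": 10, "m02": 9, "m03": 7, "m04": 8, "m05": 3, "m06": 9}
wave_2 = {"m01": 10, "m02": 6, "m03": 9, "m04": 8, "m05": 4, "m06": 7}

def members_to_follow_up(prev, curr, min_drop=2):
    """Members present in both waves whose category changed or whose score fell by min_drop or more."""
    flagged = []
    for member in sorted(prev.keys() & curr.keys()):
        before, after = prev[member], curr[member]
        if category(before) != category(after) or before - after >= min_drop:
            flagged.append((member, before, after))
    return flagged

print("NPS wave 1:", round(nps(wave_1.values())), "wave 2:", round(nps(wave_2.values())))
for member, before, after in members_to_follow_up(wave_1, wave_2):
    print(f"{member}: {before} -> {after}")
```

Flagging upward movers as well as decliners is a deliberate choice in this sketch: both groups can explain why the aggregate score moved.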

The architecture we use now extends that logic across multiple signal sources.

- Survey data anchors the longitudinal story.

- Driver analysis, with both statistical modeling and MaxDiff prioritization, identifies what’s really driving outcomes, not just correlating with them.

- Social intelligence, analyzed through our Charlie by Finch Brands platform, captures how cultural narratives are shaping brand meaning between survey waves.

- Time-bound product review analysis at scale surfaces the consumer experience in real language tied to specific attributes.

- AI-moderated video qual goes deep on the questions the data raises.

- Where sales data is available, it closes the loop between perception and behavior.

No single source explains performance. Actionable insight comes from triangulating truth.

The Last Mile Is Where Trackers Trip Up

Even when the research is good, it often fails at the last mile. Reports get too long. Slide counts balloon. The signal gets buried in documentation. Different stakeholders receive the same global report and draw different conclusions because nobody built a clear narrative that connects the numbers to decisions.

We’ve seen brilliant tracker data sit unused because the report didn’t give anyone a clear answer to the actual question: what do we do now?

Reporting for brand tracking should work from a clear principle: signal over volume. The executive summary shouldn’t be a restatement of every metric that moved. It should answer three questions, clearly, with enough context to act.

- What changed?

- Why did it change?

- What should we do about it?

Everything else is supporting documentation for the people who need to go deeper.

From Scoreboard to Strategy Engine

The shift we’re arguing for isn’t incremental. It’s a different conception of what tracking is for.

Traditional tracking is a system of record. You document brand health over time, protect the methodology, and generate a comparable body of evidence. That has value. But it’s not sufficient for the decisions brand teams face every day as the marketplace accelerates and decision windows compress.

The new model for best-in-class tracking is a system of explanation. It doesn’t just tell you what moved; it tells you why, with enough convergent evidence from multiple sources that the answer is defensible. It doesn’t just report on the past; it surfaces early signals from social and experiential data that give teams a lead on what’s coming. It doesn’t just hand over a report; it integrates into the working rhythm of the brand team so that the insight is alive between waves, not just delivered twice a year.

That’s a harder thing to build. It requires a more sophisticated methodology, genuine integration across data sources, and a partner willing to do the analytical and narrative work that turns data into direction.

But for brands that are serious about brand-building as a strategic function, it’s the standard the work needs to meet now.

John Ferreira is Chief Insights & Innovation Officer at Finch Brands, where he develops the firm’s innovative approaches to research and AI-powered insight. His perspective on brand tracking is shaped by genuine dual experience: he spent 11 years at Campbell Soup as a client on the receiving end of tracker reports and has spent the better part of a decade on the agency side designing better ones. Knowing what it feels like to sit in the debrief and wonder “so what do we do now?” is what drives the methodology behind this work. He holds an MBA from the Wharton School of the University of Pennsylvania.